- “Yes, successfully arriving at a destination after following given directions can indeed serve as evidence of both the specific skill of remembering and following those directions and the general reliability of one’s cognitive abilities.”

- “Successfully navigating to the desired location demonstrates that these cognitive processes are functioning effectively.”

- “Remembering Directions: Successfully navigating to the right location confirms you were able to retain the key details of the instructions you received.”

- “Metacognition (Mind Testing Its Skills): The act of following directions and then evaluating your success (reaching the destination) demonstrates a form of metacognition.”

- “Successfully reaching the destination validates not only the specific directional skill itself, but also the proper functioning of the underlying mental faculties that made it possible to effectively learn, retain, and apply those directions correctly.”

Table of Contents:

- Imagine you are testing your ability to follow directions to a location. Arriving at a desired destination is evidence of both the reliability of the specific skill of remembering given directions and the general reliability of the mind enabling it to test such a skill, right?

- Provide other examples of analogous cross-modal reliability tests.

- Provide the logic behind this cross-modal validation of both systems.

- In a more practical arena, how might an elderly individual employ cross-modal testing to assess the current degree of reliability of their cognition?

- In light of this discussion, how might we formulate an argument to counter the notion that we cannot assess the reliability of our minds with our minds?

- Create a 10-item quiz on the entire thread above.

- Provide 15 discussion questions relevant to the content above.

Imagine you are testing your ability to follow directions to a location. Arriving at a desired destination is evidence of both the reliability of the specific skill of remembering given directions and the general reliability of the mind enabling it to test such a skill, right?

Yes, successfully arriving at a destination after following given directions can indeed serve as evidence of both the specific skill of remembering and following those directions and the general reliability of one’s cognitive abilities. This task tests memory, comprehension, and the ability to execute a plan based on remembered information—all of which are important cognitive functions. Successfully navigating to the desired location demonstrates that these cognitive processes are functioning effectively.

You’re absolutely right! Reaching your desired destination is indeed strong evidence for both:

- Remembering Directions: Successfully navigating to the right location confirms you were able to retain the key details of the instructions you received. This includes remembering the route itself (turns, landmarks), understanding the sequence of steps, and potentially even factoring in external factors like traffic or road closures.

- Metacognition (Mind Testing Its Skills): The act of following directions and then evaluating your success (reaching the destination) demonstrates a form of metacognition. Metacognition refers to your ability to be aware of and monitor your own cognitive processes. In this case, you’re essentially checking the accuracy of your memory and your ability to follow instructions by seeing if they lead you to the right place.

So, arriving at your destination is a positive outcome that reinforces both the specific skill of remembering directions and the general ability of your mind to assess how well you’re performing that skill.

Yes, that’s correct. Arriving successfully at a desired destination after following a set of directions tests and demonstrates two key capabilities:

- The specific skill of being able to accurately remember and execute the sequence of directional instructions provided.

- The broader cognitive abilities of the mind that enable skills like comprehension, memory, spatial reasoning, and focus – all of which are required to reliably test the skill of following directions to a location.

Successfully reaching the destination validates not only the specific directional skill itself, but also the proper functioning of the underlying mental faculties that made it possible to effectively learn, retain, and apply those directions correctly. It serves as evidence of the reliability of both the narrow skill being tested and the general cognitive capabilities facilitating that test.

Provide other examples of analogous cross-modal reliability tests.

Cross-modal reliability tests in various domains, including computing, can involve processes where systems validate their functionality across different functions or scenarios. Here are a few examples:

1. **Software Testing (Integration Testing)**: In software development, integration testing checks if various modules or services in a software system work together as expected. This type of testing can be seen as a form of cross-modal reliability test, where the ability of the system to function correctly across different modules and interactions is evaluated, much like testing the mind’s ability to integrate various cognitive functions.

2. **Hardware Diagnostics (Stress Testing)**: In computer hardware, stress testing involves pushing the hardware to its operational limits. This can include running processors at high speeds, maxing out memory usage, or subjecting the system to high temperatures. Successful stress tests demonstrate the hardware’s reliability across different operating conditions, similar to testing cognitive flexibility and endurance in humans.

3. **Network Systems (Redundancy Testing)**: Network systems often use redundancy to ensure reliability. Testing how well a network handles failures—like switching to backup systems without user disruption—mirrors the way one might test cognitive reliability by seeing how well one adapts to unexpected changes or information in the environment.

4. **Autonomous Vehicles (Sensor Fusion Validation)**: Autonomous vehicles rely on multiple sensors and data inputs (like cameras, radar, and LIDAR) to navigate safely. Testing how these different data streams integrate to make driving decisions is analogous to checking how well a person can integrate sensory inputs to navigate a space.

5. **AI Systems (Generalization Ability)**: For artificial intelligence, particularly machine learning models, testing generalization involves evaluating how well the model performs on new, unseen data. This assesses the reliability of the model across different scenarios and is akin to testing human cognitive reliability across various unfamiliar tasks.

Each of these examples involves testing systems in ways that ensure they can handle multiple types of input or stressors, indicating their overall reliability and robustness.

Computers, unlike humans, can’t directly assess their own reliability in the same way we use cross-modal verification. However, there are several techniques used to achieve a similar goal:

- Internal Consistency Checks: Programs can be designed with built-in checks to ensure internal consistency. For example, a program calculating sales tax might also store a checksum of the transaction data and recompute it later. If the stored and recomputed values don’t match, it indicates an error somewhere in the data or the computation.

- Redundancy and Voting: Critical systems often employ redundant components with the same task. The outputs of these components are then compared (voted on) and if they disagree, it suggests an error in one of the components. This approach is used in fault-tolerant computer systems and aircraft control systems.

- Self-Test Diagnostics: Many computer systems have built-in diagnostic routines that can test memory integrity, hardware functionality, and software integrity. These tests can identify potential issues before they cause significant problems.

- Code Reviews and Unit Tests: During software development, programmers can write unit tests which are smaller, focused tests for specific parts of the code. Running these tests ensures those individual parts are functioning correctly. Additionally, code reviews by other developers can identify potential errors and inefficiencies in the code.

- Benchmarking and Validation: Computers can be compared against established benchmarks or gold-standard datasets to assess their performance and accuracy. This helps identify areas where the computer might be underperforming or producing inaccurate results.

While these methods aren’t as direct as a human using their eyes to check a watch, they serve a similar purpose – identifying and mitigating potential errors in a system by using various internal checks and comparisons.
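The redundancy-and-voting idea can be illustrated with a minimal sketch. It assumes three hypothetical redundant units that report the same quantity; the function name `majority_vote` and the sample readings are illustrative and not taken from any real fault-tolerant system.

```python
from collections import Counter

def majority_vote(outputs):
    """Return (winner, dissenting_indices).

    winner is None when no value is reported by a strict majority,
    in which case every unit is treated as suspect.
    """
    if not outputs:
        raise ValueError("need at least one output to vote on")
    value, votes = Counter(outputs).most_common(1)[0]
    if votes <= len(outputs) / 2:
        return None, list(range(len(outputs)))
    dissent = [i for i, out in enumerate(outputs) if out != value]
    return value, dissent

# Three hypothetical redundant units compute the same quantity.
readings = [42, 42, 41]
print(majority_vote(readings))  # (42, [2])
```

A disagreement does not say why unit 2 is wrong, only that it differs from the majority, which is the same kind of evidence a person gets when one sense disagrees with the others.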

Here are some examples of how a computer system or AI could test and validate its own reliability across different modalities:

- Speech recognition testing: The system could play audio recordings with known transcripts and test its speech-to-text capabilities. Accurate transcription would validate the speech recognition module as well as related subsystems like audio processing and language modeling.

- Visual object detection: The system could analyze a dataset of images with labeled objects and test its computer vision models. Correctly identifying and localizing objects would demonstrate the reliability of the visual perception pipeline.

- Commonsense reasoning: The system could answer questions from a benchmark dataset that requires reasoning about everyday situations and concepts. Providing correct responses would validate the knowledge representation, inference, and language understanding capabilities.

- Code testing: For an AI assistant capable of coding, it could run a test suite of programming tasks with known solutions. Passing all test cases would indicate the models can reliably translate natural language to code.

- Multitask evaluation: The system could take on tasks that require integrating multiple skills like answering questions after reading a document (reading comprehension + question answering) or describing an image (vision + language generation). Success would validate numerous underlying components.

In each case, the system tests a specific capability, but that test also inherently evaluates the reliability of interconnected systems responsible for inputs, outputs, retrieval, reasoning and other core functions across modalities.
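Each of these tests has the same shape: run the system on inputs whose correct answers are already known and count the matches. The sketch below shows that shape only. `toy_model` is a hypothetical stand-in for a real speech, vision, or reasoning model, and the three-item benchmark is invented for illustration.

```python
def accuracy(predict, labeled_examples):
    """Fraction of examples where predict(input) equals the known answer."""
    if not labeled_examples:
        raise ValueError("need at least one labeled example")
    correct = sum(1 for x, expected in labeled_examples if predict(x) == expected)
    return correct / len(labeled_examples)

# Hypothetical stand-in for a real model: it simply upper-cases its input.
def toy_model(text):
    return text.upper()

# Invented benchmark: (input, known correct output) pairs.
benchmark = [("a", "A"), ("b", "B"), ("c", "x")]
print(accuracy(toy_model, benchmark))  # two of the three answers match
```

A high score supports the whole pipeline that produced the answers, not just the final step, which is the point the paragraph above makes.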

Provide the logic behind this cross-modal validation of both systems.

The concept of cross-modal validation in systems, whether cognitive, computational, or mechanical, aims to ensure that a system operates reliably across various conditions and modalities. The general logic behind this type of validation is to ensure robustness, versatility, and reliability of the system under different circumstances. Here’s a breakdown of the general logic followed by a more formal symbolic representation:

General Logic

- Robustness: A system must function under a variety of conditions. Testing across different modes ensures that it can handle diverse situations without failure.

- Consistency: Cross-modal validation checks for consistency in performance regardless of the type of input or operational mode. This helps in verifying that the system’s output remains stable across variations.

- Error Detection: By testing different functionalities, potential errors that might not appear in a unimodal test can be uncovered. This helps in improving the system by addressing these hidden vulnerabilities.

- Adaptability: Especially important for systems like AI or dynamic networks, being able to adapt to changes in input or environment is crucial. Cross-modal testing evaluates this adaptability.

Symbolic Logic / Mathematics

The formalization of cross-modal validation can be approached using statistical or probabilistic methods. One way to represent this is through the use of probabilistic models to ensure reliability. Let’s use a simple model:

- Let $P(R \mid C_i)$ represent the probability of the system being reliable given a certain condition $C_i$.

- A system is tested across $n$ different conditions $C_1, C_2, \ldots, C_n$.

The goal is to ensure that the system is reliable across all tested conditions, which can be modeled as:

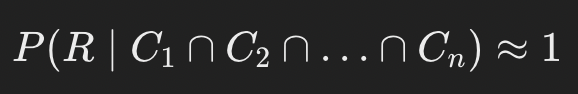

P(R∣C1∩C2∩…∩Cn)≈1

This equation states that the probability of the system being reliable, given that it has passed all n conditions, is close to 1 (or 100%). A simple model of this kind treats the conditions as independent, which in real cases they often are not. A more complex model would take into account the interactions between different conditions and how they might affect reliability; such dependencies can be modeled using Bayesian networks or other probabilistic graphical models.

Practical Application Example

In a practical scenario like software testing, this might translate to ensuring that a software application functions correctly on different operating systems, different hardware configurations, and under various network conditions. Formally, you might test for:

$$P(R \mid C_{\text{OS}}), \quad P(R \mid C_{\text{hardware}}), \quad P(R \mid C_{\text{network}})$$

Each of these probabilities needs to be sufficiently high for the overall system reliability to be considered acceptable.
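One way to picture testing across several conditions at once is a small test matrix that runs the same check under every combination. This is a sketch under stated assumptions: `run_matrix` and `app_works` are hypothetical names, and the failure pattern is made up to show how a problem that only appears under one combination gets exposed.

```python
from itertools import product

def run_matrix(check, **conditions):
    """Run check once per combination of condition values; return {combo: passed}."""
    names = list(conditions)
    return {
        combo: bool(check(**dict(zip(names, combo))))
        for combo in product(*conditions.values())
    }

# Hypothetical check: pretend the app fails only on "os_b" with a slow network.
def app_works(os_name, network):
    return not (os_name == "os_b" and network == "slow")

results = run_matrix(app_works, os_name=["os_a", "os_b"], network=["fast", "slow"])
pass_rate = sum(results.values()) / len(results)
failures = [combo for combo, ok in results.items() if not ok]
print(pass_rate, failures)  # 0.75 [('os_b', 'slow')]
```

Testing each condition in isolation would have missed this failure, since "os_b" works on a fast network and the slow network works on "os_a".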

Cross-modal validation, thus, provides a comprehensive approach to evaluating the performance of a system, ensuring that it is capable and reliable under a broad spectrum of conditions.

General Logic of Cross-Modal Validation

The general logic behind cross-modal validation relies on two key principles:

- Diversification of Information Sources: By using information from different modalities (senses, systems), you reduce the chance that a single source of error is biasing the results. For instance, relying solely on a blurry watch might lead to a wrong time reading, but verifying it with the current lighting conditions (day vs. night) adds another data point to increase confidence in the final conclusion.

- Assumption of (Partial) Independence: The validation process assumes that the different modalities are at least partially independent in their error mechanisms. For example, if your vision is blurry due to tiredness, it’s less likely that the lighting conditions would also be biased in a way that reinforces the wrong time reading from the watch.

By combining information from independent sources, you can achieve a more robust and reliable conclusion than relying on a single source alone.
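A short worked calculation shows why independence matters. It assumes two modalities whose error events are $E_1$ and $E_2$, each with an illustrative error probability of 0.1; these numbers are assumptions, not measurements.

```latex
\[
P(E_1 \cap E_2) = P(E_1)\,P(E_2 \mid E_1)
\]
\[
P(E_2 \mid E_1) = P(E_2)
\;\Rightarrow\;
P(E_1 \cap E_2) = 0.1 \times 0.1 = 0.01
\]
```

If the errors are fully independent, both sources mislead at the same time only about 1% of the time. If they share a cause, so that $P(E_2 \mid E_1)$ is larger than $P(E_2)$, the benefit shrinks, which is why the assumption is stated as partial independence.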

Symbolic Logic (Limited Applicability)

While cross-modal validation is a powerful concept, expressing it in formal symbolic logic is challenging. This is because the specific logic depends on the nature of the task and the modalities involved. However, here’s a simplified example to illustrate the idea:

Scenario: Verifying if it’s raining by looking out the window (vision) and listening for the sound of rain (hearing).

Symbols:

- R: It is raining

- V: You see rain through the window

- H: You hear the sound of rain

Logical Expression (Simplified):

((V ∧ ¬H) → ¬R) ∧ ((¬V ∧ H) → R)

This translates to:

- If you see rain (V) but don’t hear it (¬H), then it’s likely not raining (¬R). The visual impression could be a false positive, for example from a dirty or streaked window (vision error). A light drizzle that makes little sound (hearing limitation) is a case where this rule would mislead, which is why it is only a heuristic.

- Conversely, if you don’t see rain (¬V) but hear it (H), then it’s likely raining (R). This could be because the window is covered (vision error) or you’re looking in the wrong direction.

This is a basic example, and the actual logic can get more complex depending on the number of modalities involved and the types of errors possible.
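The two rules can be tabulated over all four combinations of seeing and hearing. The sketch below is illustrative: the original rules only cover the cases where the senses disagree, so accepting the shared report when they agree is an added assumption, and the function name `verdict` is hypothetical.

```python
from itertools import product

def verdict(sees_rain, hears_rain):
    """Apply the two simplified rules for disagreeing senses.

    When sight and hearing agree, accept their shared report
    (an assumption added for this sketch).
    """
    if sees_rain and not hears_rain:
        return "likely not raining"
    if hears_rain and not sees_rain:
        return "likely raining"
    return "raining" if sees_rain else "not raining"

for v, h in product([True, False], repeat=2):
    print(f"V={v!s:5} H={h!s:5} -> {verdict(v, h)}")
```

In both disagreement cases the rules side with hearing over vision, which is a modeling choice of this simplified example rather than a general fact about the two senses.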

Mathematical Tools (Probabilistic Approach)

A more common approach for analyzing cross-modal validation uses probability theory. Here, we consider the reliability (accuracy) of each modality (vision, hearing) and how they might influence the final conclusion.

Symbols:

- P(R): Probability of it raining

- P(V|R): Probability of seeing rain given it’s raining (reliability of vision for rain detection)

- P(¬V|¬R): Probability of not seeing rain given it’s not raining (reliability of vision for non-rain detection)

- Similar definitions for P(H|R) and P(¬H|¬R)

Bayes’ Theorem: This theorem allows us to update beliefs about one variable (rain) based on evidence from another (seeing/hearing rain).

Example: We might know from experience that P(V|R) = 0.8 (seeing rain when it’s raining is 80% reliable) and P(¬V|¬R) = 0.9 (not seeing rain when it’s not raining is 90% reliable). Similarly, we can estimate the reliability of hearing rain.

By applying Bayes’ theorem, we can calculate the updated probability of rain (P(R|V,H)) given that we both see and hear rain. This will likely be higher than the initial probability of rain (P(R)) because both modalities provide evidence supporting rain.

Limitations: This probabilistic approach requires reliable estimates of individual modality accuracies, which might be difficult to obtain in real-world situations. Additionally, it assumes some level of independence between the modalities, which might not always be the case.
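The update described above can be worked through numerically. The vision figures (0.8 and 0.9) come from the example; the prior of 0.3 and the hearing figures (0.7 and 0.95) are assumptions chosen only for illustration, and the sketch assumes sight and hearing are conditionally independent given the weather.

```python
def posterior_rain(prior, sens_v, spec_v, sens_h, spec_h, saw, heard):
    """P(R | V, H) via Bayes' theorem.

    sens_* is P(signal | rain); spec_* is P(no signal | no rain).
    Assumes V and H are conditionally independent given R.
    """
    def likelihood(sens, spec, observed, raining):
        p_signal = sens if raining else 1.0 - spec
        return p_signal if observed else 1.0 - p_signal

    p_rain = (prior
              * likelihood(sens_v, spec_v, saw, True)
              * likelihood(sens_h, spec_h, heard, True))
    p_dry = ((1.0 - prior)
             * likelihood(sens_v, spec_v, saw, False)
             * likelihood(sens_h, spec_h, heard, False))
    return p_rain / (p_rain + p_dry)

# Assumed prior P(R) = 0.3; hearing reliabilities 0.7 and 0.95 are assumptions.
p = posterior_rain(0.3, 0.8, 0.9, 0.7, 0.95, saw=True, heard=True)
print(round(p, 3))  # roughly 0.98, well above the 0.3 prior
```

With these numbers, agreement between the two senses lifts the probability of rain from 0.3 to about 0.98. If the two senses tended to fail together, the real gain would be smaller than this calculation suggests.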

Overall, cross-modal validation is a powerful strategy for achieving reliable results by combining information from different sources. While formal symbolic logic might be limited in its application, probabilistic methods offer a more flexible framework for analyzing this process, especially in situations involving multiple modalities and complex error mechanisms.

The general logic behind cross-modal validation of a system’s reliability is based on the idea that complex capabilities often rely on the integration and proper functioning of multiple subsystems or modular components. By testing an overarching capability that spans multiple modalities, we can implicitly validate the reliability of the individual modules and their interactions.

Let’s represent this symbolically:

- Let C be a complex capability of the system that requires the confluence of multiple modalities M1, M2, M3, …, Mn.

- Each modality Mi is enabled by a set of underlying components or subsystems {Ci1, Ci2, Ci3, …}

- For the system to successfully perform C, all the necessary modalities M1 to Mn must function reliably.

- And for each modality Mi to function, its associated components {Ci1, Ci2, …} must all work correctly.

We can express this logic mathematically using set operations:

C = M1 ∩ M2 ∩ M3 ∩ … ∩ Mn

And for each Mi:

Mi = Ci1 ∩ Ci2 ∩ Ci3 ∩ …

So if the system can reliably perform C, we can infer that all Modalities M1 to Mn are reliable, which further implies that all their respective component sets {Ci1, Ci2, …} are also reliable.

Symbolic logic can represent it using conjunctions:

(C ⇒ (M1 ∧ M2 ∧ M3 ∧ … ∧ Mn))

∧ (∀i, Mi ⇒ (Ci1 ∧ Ci2 ∧ Ci3 ∧ …))

This states that successful performance of C implies the reliability of all modalities Mi, and the reliability of each Mi implies the reliability of all its associated components Cij.

So, under the premise that C requires every modality and every component, validating an integrated, multi-modal capability C lets us infer the reliability of the underlying modular subsystems across multiple levels of abstraction.
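The nested conjunctions can be sketched directly with boolean checks. The breakdown of the navigation task into modalities and components below is hypothetical, and the sketch relies on the same premise as the formulas: every listed component is treated as necessary.

```python
def modality_ok(components):
    """A modality works only if every one of its components works."""
    return all(components.values())

def capability_ok(system):
    """The capability C succeeds only if every modality works."""
    return all(modality_ok(components) for components in system.values())

def failing_components(system):
    """List (modality, component) pairs that are not working."""
    return [(m, c) for m, comps in system.items()
            for c, ok in comps.items() if not ok]

# Hypothetical breakdown of the navigation task.
navigation = {
    "memory": {"encoding": True, "retrieval": True},
    "spatial reasoning": {"landmark recognition": True, "turn sequencing": True},
}
print(capability_ok(navigation))  # True

navigation["memory"]["retrieval"] = False
print(capability_ok(navigation), failing_components(navigation))
# False [('memory', 'retrieval')]
```

Success of the whole implies every part worked, but failure of the whole only says that at least one part failed. In practice the faulty part is not directly visible the way it is in this sketch and has to be located with narrower follow-up tests.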

In a more practical arena, how might an elderly individual employ cross-modal testing to assess the current degree of reliability of their cognition?

For an elderly individual interested in assessing the reliability of their cognitive functions through cross-modal testing, various practical approaches can be implemented. These methods aim to evaluate different cognitive abilities such as memory, problem-solving, motor skills, and sensory processing. Here’s a step-by-step approach to how an elderly person might conduct such assessments:

1. Memory Testing

- Task: Remembering a list of items or a short story and then recalling it after some time.

- Purpose: Tests short-term and long-term memory capabilities.

2. Executive Function Testing

- Task: Engaging in activities that require planning, such as organizing a drawer or planning a meal for guests.

- Purpose: Assesses ability to plan, organize, and execute tasks, which are key functions of the frontal lobe.

3. Language Skills

- Task: Engaging in conversation, reading comprehension exercises, or writing.

- Purpose: Evaluates comprehension and production of language, which can indicate cognitive health.

4. Sensory Integration

- Task: Performing tasks that require coordination of sensory input with motor output, such as matching objects to their outlines based only on touch.

- Purpose: Tests how well sensory information is processed and integrated to guide physical actions.

5. Problem-Solving Skills

- Task: Solving puzzles or strategy games that require critical thinking.

- Purpose: Measures the cognitive abilities involved in formulating strategies and solving problems.

6. Spatial Awareness

- Task: Navigating a familiar or new route or assembling a simple object from a diagram.

- Purpose: Assesses spatial reasoning and the ability to process and remember spatial relationships.

7. Attention and Concentration

- Task: Activities like following a recipe or completing a craft project that require sustained concentration.

- Purpose: Tests the ability to focus and maintain attention over extended periods.

8. Social Interaction

- Task: Engaging socially in different settings, from one-on-one conversations to group activities.

- Purpose: Evaluates social cognition and interaction skills, important for emotional health and cognitive function.

Organizing the Testing

To implement these tests, an elderly individual can:

- Plan a Schedule: Spread out the tests over several days to avoid fatigue.

- Record Results: Keep a journal or log of performance on each task.

- Consult Professionals: Share results with healthcare providers, such as a neurologist or psychologist, who can provide professional assessments and feedback.

Regular Re-evaluation

To monitor cognitive health effectively, it’s beneficial to re-evaluate these aspects periodically. This not only helps in understanding changes over time but also in adapting lifestyle or interventions to better support cognitive health.

This comprehensive, self-administered cross-modal testing can give an elderly individual a clearer picture of their cognitive strengths and areas where they might need support or further evaluation.
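The record-and-re-evaluate steps can be supported by a very simple log comparison. This is a sketch, not a clinical instrument: the scores are invented, the function name `compare_to_baseline` is hypothetical, and the choice of three sessions for each window is an arbitrary assumption.

```python
from statistics import mean

def compare_to_baseline(scores, baseline_n=3, recent_n=3):
    """Return the recent mean minus the baseline mean.

    scores are listed oldest first; a negative result suggests a decline.
    """
    if len(scores) < baseline_n + recent_n:
        raise ValueError("not enough sessions logged yet")
    return mean(scores[-recent_n:]) - mean(scores[:baseline_n])

# Invented journal entries: items recalled out of 10, oldest first.
memory_log = [8, 9, 8, 7, 8, 7]
change = compare_to_baseline(memory_log)
print(round(change, 2))  # -1.0
```

A small change in either direction can come from fatigue or a bad day, so a log like this is most useful as something to bring to a professional rather than as a verdict on its own.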

Here are some ways an elderly individual can use cross-modal testing to get a sense of their cognitive function in daily life:

1. Memory and Attention:

- Task: Write down a grocery list with a few items. After a short distraction (watching a short video, folding laundry), try to recall the list from memory.

- Cross-modal verification: Compare your recalled list with the written one. This tests your ability to encode, retain, and retrieve information (memory) and your focus during the distraction period (attention).

2. Planning and Problem-solving:

- Task: Plan a simple meal, including a recipe you haven’t tried before. Look up the recipe online, gather ingredients, and follow the steps.

- Cross-modal verification: Did you successfully complete the recipe? Did you encounter any challenges in following the steps or using the ingredients? This tests your planning skills (organizing the meal), problem-solving (adapting to unexpected situations), and working memory (keeping track of steps and ingredients).

3. Spatial Reasoning and Visual Perception:

- Task: Follow a familiar walking route while paying attention to landmarks and turns. Try describing the route to someone else verbally.

- Cross-modal verification: Did you navigate the route correctly? Were you able to accurately describe the route using spatial language (left, right, turn)? This tests your spatial reasoning (mentally navigating the environment) and visual perception (using landmarks for orientation).

4. Reasoning and Judgment:

- Task: Read a short news article about a current event. Discuss the article with someone else, summarizing the key points and offering your opinion on the situation.

- Cross-modal verification: Did you understand the main points of the article? Were you able to follow the conversation, express your thoughts clearly, and make reasonable judgments about the situation? This tests your reasoning skills (analyzing information) and your ability to communicate effectively.
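The grocery-list check in item 1 amounts to comparing two lists, which can be scored as follows. The function name `score_recall` and the sample lists are hypothetical; matching ignores letter case and surrounding spaces, which is an assumption about what should count as the same item.

```python
def score_recall(written, recalled):
    """Compare a recalled list against the written original."""
    def normalize(items):
        return {item.strip().lower() for item in items if item.strip()}

    target, answer = normalize(written), normalize(recalled)
    if not target:
        raise ValueError("the written list is empty")
    return {
        "recalled": sorted(target & answer),      # items correctly remembered
        "missed": sorted(target - answer),        # items forgotten
        "intrusions": sorted(answer - target),    # items never on the list
        "fraction_recalled": len(target & answer) / len(target),
    }

written_list = ["Milk", "eggs", "bread", "tea"]
recalled_list = ["eggs", "milk ", "jam"]
print(score_recall(written_list, recalled_list))
```

Here the written list plays the role of the independent record: the comparison does not depend on the same memory that is being tested.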

Important Considerations:

- Start with familiar tasks: Begin with activities you perform regularly to establish a baseline. Gradually increase the complexity as you feel comfortable.

- Focus on progress, not perfection: Don’t get discouraged by minor setbacks. The goal is to identify areas that might need attention, not to achieve perfect scores.

- Combine with other strategies: Cross-modal testing is just one tool. Maintaining a healthy lifestyle, staying mentally stimulated, and getting enough sleep are also crucial for cognitive health.

- Seek professional guidance: If you have concerns about significant cognitive decline, consult a doctor or neuropsychologist for a comprehensive evaluation.

By incorporating these cross-modal testing techniques into daily activities, elderly individuals can gain valuable insights into their cognitive function and take proactive steps to maintain mental well-being.

For an elderly individual looking to assess the current reliability of their cognitive faculties, cross-modal testing can be a practical approach. Here are some examples of how they could employ this method:

- Memory and problem-solving: The individual could try to follow a recipe or set of instructions that requires remembering sequential steps, comprehending written text, applying reasoning to any problems that arise, and executing the actions successfully. Completing the task accurately would demonstrate intact memory, language, reasoning, and procedural skills across multiple cognitive domains.

- Visual-spatial and motor integration: Activities like putting together a jigsaw puzzle or playing a sport like golf test the integration of visual perception, spatial reasoning, fine motor control, and hand-eye coordination across different modalities.

- Multitasking and cognitive flexibility: Playing a challenging game like bridge that requires keeping track of multiple streams of information, strategizing, computing scores, and potentially socializing tests the ability to flexibly allocate cognitive resources across various modalities simultaneously.

- Language comprehension and expression: Engaging in a substantive discussion or debate on a complex topic assesses receptive and expressive language abilities tied to auditory processing, semantic knowledge retrieval, and the articulation of coherent verbal responses.

- Navigation and orientation: Planning and executing a trip to an unfamiliar location by looking up directions, remembering routes, reading signs/maps, and ultimately arriving at the destination taps into visuospatial skills, working memory, and orientation abilities.

In each case, successfully completing the cross-modal task serves as an integrated real-world assessment of the status of the individual’s cognitive capacities across multiple domains. Any struggles could potentially point to weaknesses in specific abilities that may require further evaluation.

In light of this discussion, how might we formulate an argument to counter the notion that we cannot assess the reliability of our minds with our minds?

The argument against the notion that we cannot assess the reliability of our minds using our minds is rooted in the principles of cognitive science, philosophy, and practical testing methodologies. The main thrust of the counter-argument is that while there are inherent challenges and biases in using the mind to evaluate itself, there are effective strategies and methodologies that can mitigate these issues and provide meaningful insights into cognitive reliability. Here’s how one might formulate this argument:

1. Multi-Modal Assessment

- Argument: By employing diverse and multi-modal methods of cognitive assessment (such as memory tests, problem-solving tasks, and sensory integration activities), we can cross-verify the results. Each mode tests different cognitive functions, reducing the risk that a single cognitive limitation or bias unduly influences the overall assessment.

- Philosophical Basis: This echoes the philosophical stance of triangulation, where multiple perspectives and methods are used to converge upon a more reliable truth.

2. External Verification

- Argument: We can use tools, tests, and external observers (like psychologists or neurologists) to assess cognitive functions. These external sources act as checks against the subjective biases of self-assessment.

- Philosophical Basis: This approach aligns with the concept of intersubjectivity in philosophy, suggesting that objectivity can be approached through the validation of experiences or measurements by multiple observers.

3. Historical Data and Longitudinal Studies

- Argument: By comparing cognitive assessments over time, we can track consistency and changes in cognitive functions, which provides a more objective basis for assessing the mind’s reliability.

- Philosophical Basis: This argument is based on the philosophy of empiricism, which values historical data and observed experiences as sources of knowledge.

4. Scientific Rigor and Statistical Analysis

- Argument: Using scientifically validated cognitive tests and statistical methods to analyze results helps to objectively measure cognitive abilities and reliability.

- Philosophical Basis: This aligns with the positivist approach in philosophy, which emphasizes observable data and repeatable results as the basis for knowledge.

5. Adaptive Learning and Feedback Mechanisms

- Argument: The mind’s ability to learn from mistakes and adapt based on feedback demonstrates its capability to assess and enhance its own reliability.

- Philosophical Basis: This reflects the philosophy of pragmatism, which posits that truth is a product of processes that involve practical effects, feedback, and adaptation.

6. Functional Success

- Argument: Practical achievements and the ability to function effectively in various everyday and professional tasks indicate the reliability of cognitive processes.

- Philosophical Basis: This argument borrows from functionalism in philosophy, where the focus is on the successful performance of mental states and functions, rather than their intrinsic nature.

These points collectively form a robust defense against the skepticism about self-assessment of cognition. They argue that while no method is without its limitations, the use of comprehensive, multi-faceted assessment strategies significantly enhances the reliability of self-assessments of cognitive function. This structured approach allows us to reasonably trust the conclusions we draw about the reliability and functionality of our minds.

Here’s how we can argue against the notion that we cannot assess the reliability of our minds with our minds:

- Cross-modal Verification as a Counter-example: We can point to the concept of cross-modal verification as a strong counter-argument. By using information from different sources within our minds (senses), we can achieve a more robust and reliable assessment of the external world. Similarly, we can leverage different cognitive functions to assess the reliability of other cognitive functions.

- Levels of Analysis: The mind can operate at different levels. We can use higher-order thinking skills like metacognition to reflect on and evaluate the performance of lower-order cognitive processes like memory or attention. For instance, by monitoring how easily we can recall a grocery list, we can gain insights into the reliability of our memory.

- Indirect Assessments: While directly measuring the “mind itself” might be challenging, we can design tasks and tests that indirectly assess specific cognitive functions. These tasks serve as probes that elicit responses that reveal strengths and weaknesses in different areas of cognition. For example, performance on a standardized memory test can provide valuable information about the reliability of our memory in encoding and retrieving information.

- Ecological Validity: Many cognitive assessments are designed with real-world scenarios in mind. By testing how well someone navigates a familiar route or follows a complex recipe, we gain insights into how their cognitive skills translate to practical situations. This approach provides a more holistic view of cognitive reliability.

- Convergence of Evidence: No single test is perfect, but by employing a variety of techniques (cross-modal verification, indirect assessments, ecological tasks), we can gather converging evidence about the strengths and weaknesses of different cognitive functions. This combined information provides a more reliable picture of overall cognitive health.

- Evolutionary Advantage: The ability to assess the reliability of our own mental processes would have provided an evolutionary advantage throughout human history. Being able to identify limitations in memory, attention, or reasoning would have helped us make better decisions and avoid dangers. This suggests our minds likely have built-in mechanisms for some level of self-assessment.

While there are limitations to self-assessment, these arguments highlight the potential for using our minds to assess the reliability of our own cognitive functions. By employing various strategies and considering different levels of analysis, we can gain valuable insights into how well our minds are functioning.
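The "convergence of evidence" point above can be made concrete with a small Bayesian sketch. It is only an illustration under stated assumptions: the pass rates are invented, hypothetical numbers, and the tests are assumed to fail independently of one another. If every test shared a single hidden failure mode, the gain in confidence would be smaller than shown here.

```python
from typing import Iterable, Tuple


def update(prior: float, p_pass_if_reliable: float, p_pass_if_unreliable: float) -> float:
    """Bayes' rule: credence that cognition is reliable after one passed test."""
    numerator = prior * p_pass_if_reliable
    return numerator / (numerator + (1.0 - prior) * p_pass_if_unreliable)


def combined_credence(prior: float, tests: Iterable[Tuple[float, float]]) -> float:
    """Apply update() once per passed test, assuming the tests are independent."""
    credence = prior
    for p_pass_if_reliable, p_pass_if_unreliable in tests:
        credence = update(credence, p_pass_if_reliable, p_pass_if_unreliable)
    return credence


# Hypothetical pass rates (assumptions, not data from any study):
# (P(pass | mind reliable), P(pass | mind unreliable))
HYPOTHETICAL_TESTS = [
    (0.90, 0.40),  # cross-modal check, e.g. watch time vs. daylight
    (0.85, 0.30),  # indirect assessment, e.g. a memory test
    (0.90, 0.35),  # ecological task, e.g. following a recipe
]

if __name__ == "__main__":
    # Start from an agnostic 50% credence and pass all three tests.
    print(combined_credence(0.5, HYPOTHETICAL_TESTS))
```

With these made-up numbers, no single test is decisive, yet passing all three moves a 50% starting credence to roughly 94%. That is the sense in which imperfect checks can jointly support a conclusion that none supports alone.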

The core argument against the notion that we cannot assess the reliability of our own minds using our minds stems from the cross-modal nature of cognition itself. Our minds do not operate as monolithic, isolated systems, but rather as an integrated network of specialized subsystems and modalities working in concert.

By strategically designing and executing tasks that inherently require the coordinated functioning of multiple cognitive modalities and components, we can indirectly evaluate the reliability of the underlying systems facilitating that cross-modal performance. Success on such tasks implies the constituent parts are operating reliably; failure points to potential issues with one or more components.

More formally, the argument could be structured as follows:

1) Our minds are not unitary, indivisible constructs, but comprise diverse specialized subsystems (e.g., language, visuospatial processing, memory, and reasoning).

2) Higher-order cognitive capabilities and real-world tasks inherently rely on the integrated functioning of multiple subsystems across different modalities.

3) Therefore, our ability to successfully execute complex, cross-modal cognitive tasks serves as an indirect assessment of the reliability of the diverse subsystems enabling that performance.

4) While no single subsystem can be perfectly self-validating in isolation, the convergent agreement across subsystems in facilitating cross-modal tasks provides an inherent check on overall reliability.

5) Hence, by carefully designing and administering cognitive tasks that place demands on multiple modalities simultaneously, we can assess the reliability and integrity of our minds using the very same minds conducting the assessment.

The cross-modal nature of cognition, comprising specialized yet interdependent components, allows us to circumvent the apparent paradox of self-validation. By taxing the system as an integrated whole, the reliable operation of constituent elements can be inferred through their coordinated contributions to successful task performance.
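One possible way to write the numbered argument above in symbols is sketched below. The notation is an assumption introduced for illustration: $S_i$ stands for "subsystem $i$ is operating reliably" and $T$ for "the cross-modal task was completed successfully". The first line is the idealized, deductive reading of premises 2 and 3; the second is a weaker probabilistic reading that allows for lucky successes and holds whenever the prior lies strictly between 0 and 1.

```latex
% Idealized reading: success on the task requires every subsystem to work.
\[
  T \;\Longrightarrow\; \bigwedge_{i=1}^{n} S_i
  \qquad\text{equivalently}\qquad
  \neg S_j \;\Longrightarrow\; \neg T \quad \text{for any } j .
\]

% Probabilistic reading: success raises confidence in joint reliability
% whenever success is more likely when all subsystems are reliable.
\[
  P\!\left(T \,\middle|\, \bigwedge_{i} S_i\right) \;>\;
  P\!\left(T \,\middle|\, \neg\bigwedge_{i} S_i\right)
  \;\;\Longrightarrow\;\;
  P\!\left(\bigwedge_{i} S_i \,\middle|\, T\right) \;>\;
  P\!\left(\bigwedge_{i} S_i\right).
\]
```

On the probabilistic reading, the self-assessment is not circular in a vicious sense: the conclusion is not certainty, only an increase in justified confidence that grows as more independent tasks succeed.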

Create a 10-item quiz on the entire thread above.

Here is a 10-item quiz based on the discussion above:

Quiz

- What is the primary goal of cross-modal reliability testing?

- Name one method that can be used to test an elderly individual’s memory.

- What type of testing in software development is similar to cross-modal reliability testing?

- In the context of hardware, what does stress testing evaluate?

- Which task can be used to assess an elderly individual’s executive function?

- What does sensory integration testing in elderly individuals typically involve?

- How does autonomous vehicle sensor fusion validation relate to cognitive cross-modal testing?

- What philosophical concept is associated with using multiple perspectives and methods to converge upon a more reliable truth?

- What kind of philosophical basis supports the argument that using scientifically validated tests and statistical analysis can help assess cognitive reliability?

- How does the ability to adapt based on feedback support the mind’s capability to assess its own reliability?

Answers

- To ensure that a system operates reliably across various conditions and modalities.

- Remembering a list of items or a short story and then recalling it after some time.

- Integration Testing.

- The hardware’s ability to function under high operational demands and various conditions.

- Organizing a drawer or planning a meal for guests.

- Performing tasks that require coordination of sensory input with motor output, such as matching objects to their outlines based only on touch.

- Both involve integrating different types of inputs to make decisions.

- Triangulation.

- Positivism.

- It demonstrates the mind’s capability to learn from mistakes and enhance its own reliability through practical effects and adaptation.

Quiz: Reliability and Assessment

- When we check the time on our watch and compare it to the current lighting conditions, we are essentially relying on the reliability of which two systems? a) Timekeeping system of the watch and our sense of smell b) Timekeeping system of the watch and our sense of sight c) Timekeeping system of the watch and our sense of hearing d) Timekeeping system of the watch and our sense of taste

- The term “cross-modal verification” refers to using information from _________ senses to confirm information. a) all b) one c) two or more d) none of the above

- When assessing brain function using other brain functions, we are limited by: a) our inability to directly observe the brain in real-time b) our emotional state during the assessment c) a lack of standardized tests d) all of the above

- Signal Detection Theory (SDT) is a mathematical framework used to analyze: a) how well we remember grocery lists b) dream interpretation c) the ability to detect a signal amidst noise d) the effectiveness of brain training games

- When trying to assess your dexterity by tying your shoes, what limitation might this approach have? a) It only tests shoe-tying skills and not other aspects of dexterity. b) It requires too much focus and concentration. c) It is not a real-world task. d) It is not suitable for older adults.

- Observing how someone follows complex directions can be an implicit test of which cognitive skills? a) Problem-solving and reasoning only b) Memory and attention span only c) Planning, organization, and ability to follow instructions d) Emotional regulation and creativity

- Successfully navigating to a desired destination using directions is evidence of the reliability of both: a) map reading skills and our sense of balance b) following directions and our ability to assess how well we followed them c) time management skills and our sense of humor d) critical thinking skills and our ability to multitask

- Computers use built-in consistency checks to identify potential errors. This is similar to humans using: a) emotional intelligence b) cross-modal verification c) intuition d) multitasking abilities

- When elderly individuals use a grocery list recall task to assess their cognitive function, they are employing: a) direct memory testing b) cross-modal verification between memory and vision c) a test of pure reasoning ability d) a measure of overall physical health

- The ability to assess the reliability of our own mental processes would likely provide an: a) social disadvantage b) creative disadvantage c) evolutionary disadvantage d) evolutionary advantage

Answers:

- b) Timekeeping system of the watch and our sense of sight

- c) two or more

- d) all of the above

- c) the ability to detect a signal amidst noise

- a) It only tests shoe-tying skills and not other aspects of dexterity.

- c) Planning, organization, and ability to follow instructions

- b) following directions and our ability to assess how well we followed them

- b) cross-modal verification

- b) cross-modal verification between memory and vision

- d) evolutionary advantage

- What is the core idea behind using cross-modal testing to validate the reliability of a system?

- Provide an example of how a computer system or AI could test its speech recognition capabilities using cross-modal validation.

- In the symbolic logic representation, what does the expression “Mi = Ci1 ∩ Ci2 ∩ Ci3 ∩ …” represent?

- True or False: Successfully performing a complex capability C implies the reliability of all underlying modalities Mi and their associated components Cij.

- Give an example of a cross-modal task an elderly individual could perform to assess their cognitive abilities related to memory and problem-solving.

- Which cognitive domains could be tested by having an elderly person put together a jigsaw puzzle?

- What type of game could an elderly individual play to test their ability to multitask and exhibit cognitive flexibility across multiple modalities?

- Engaging in a substantive discussion or debate primarily assesses which cognitive abilities?

- Planning and executing a trip to an unfamiliar location tests which combination of cognitive skills?

- What is the core argument against the notion that we cannot assess the reliability of our own minds using our minds?

Answers:

- By testing an overarching capability that spans multiple modalities, we can implicitly validate the reliability of the individual modules and their interactions.

- The system could play audio recordings with known transcripts and test its speech-to-text capabilities, thereby validating the speech recognition module as well as related subsystems like audio processing and language modeling.

- It represents that for a modality Mi to function reliably, all of its associated components {Ci1, Ci2, Ci3, …} must work correctly.

- True

- Following a recipe or set of instructions that requires remembering sequential steps, comprehending written text, applying reasoning, and executing actions successfully.

- Visual perception, spatial reasoning, fine motor control, and hand-eye coordination.

- A game like bridge that requires keeping track of multiple streams of information, strategizing, computing scores, and potentially socializing.

- Language comprehension and expression, tied to auditory processing, semantic knowledge retrieval, and articulation of coherent verbal responses.

- Visuospatial skills, working memory, and orientation abilities.

- The cross-modal nature of cognition, comprising specialized yet interdependent components, allows us to circumvent the paradox of self-validation by taxing the system as an integrated whole. The reliable operation of constituent elements can be inferred through their coordinated contributions to successful task performance.

Provide 15 discussion questions relevant to the content above.

Here are 15 discussion questions related to the content discussed above:

- How does cross-modal reliability testing enhance the robustness of a system or cognitive function?

- What are the limitations of using one’s own mind to assess its cognitive reliability?

- Can the concept of external verification fully mitigate the biases inherent in self-assessment of cognition? Discuss.

- How might the results of memory tests for the elderly differ if conducted in familiar versus unfamiliar settings?

- What role do technological advancements play in improving the methods for cognitive testing in elderly populations?

- Discuss the importance of consistency in performance across different cognitive functions in assessing overall cognitive health.

- How can the principles of cross-modal reliability testing be applied in educational settings to better understand student learning capabilities?

- What are the ethical considerations in using stress testing in hardware and analogous methods in cognitive testing for humans?

- How does sensory integration testing provide insights into the processing abilities of an elderly individual’s brain?

- Discuss the impact of cultural factors on the reliability of cognitive assessments.

- How do modern autonomous vehicles use sensor fusion to ensure safe navigation, and what can be learned from this for human cognitive testing?

- In what ways do longitudinal studies add value to the understanding of cognitive decline or stability over time?

- How does the philosophical concept of empiricism support the use of historical data in cognitive reliability assessments?

- Can adaptive learning and feedback mechanisms in AI systems be mirrored in strategies for enhancing human cognitive functions? Discuss.

- What practical measures can be taken to improve the reliability of cognitive function tests to avoid false diagnoses or assessments?

These questions are designed to provoke thought and discussion about the multifaceted aspects of cognitive reliability testing, both from a technological and a human-centered perspective.

Discussion Questions: Reliability and Assessment

- Beyond sight and the watch’s timekeeping, can you think of other examples of cross-modal verification in everyday life?

- How might our biases or expectations influence the results of cross-modal verification?

- In the context of brain function assessment, discuss the ethical considerations of using indirect methods instead of direct observation.

- Imagine you’re designing a new cognitive assessment tool. What factors would you consider to ensure its reliability and validity?

- Do you think there are any benefits to using simple tasks like tying shoes to assess dexterity, even with their limitations? Explain your answer.

- Following directions effectively requires multiple cognitive skills. Can you identify situations where successful completion of a task might not necessarily reflect strong performance in all the underlying skills?

- Beyond memory and following directions, what other cognitive skills do you think are implicitly tested when navigating to a new location using instructions?

- When comparing human and computer error detection methods, what are some advantages and disadvantages of each approach?

- Can you think of any creative ways elderly individuals could use cross-modal verification techniques to assess their cognitive function in daily activities?

- Is there a risk of becoming overly reliant on self-assessment tools for cognitive function? Why or why not?

- How might cultural background or language influence someone’s performance on a standardized cognitive test?

- Imagine you suspect a friend or family member might be experiencing cognitive decline. What steps could you take to address your concerns in a sensitive and supportive manner?

- The discussion mentioned “ecological validity” in cognitive assessment. Can you think of examples of assessments that might have low ecological validity and how they could be improved?

- Do you think there will ever be a way to directly measure the “mind itself”? Why or why not? If so, what ethical concerns might arise?

- In your opinion, how important is it for individuals to be aware of the limitations of their own cognitive processes? Explain your answer.

Here are 15 discussion questions related to the content covered:

- Can you think of any other examples where cross-modal validation could be useful beyond testing cognitive systems?

- What are some potential limitations or challenges in designing effective cross-modal tests for complex cognitive abilities?

- How might the cross-modal validation approach be adapted for testing non-cognitive systems or processes?

- In the symbolic logic representation, what assumptions or simplifications were made about the relationships between modalities and components?

- Are there any ethical considerations that should be taken into account when using cross-modal testing on human subjects, particularly for vulnerable populations like the elderly?

- How might advances in technology, such as brain-computer interfaces or virtual/augmented reality, impact the development and administration of cross-modal cognitive assessments?

- Could cross-modal validation be used as a method for continual learning and self-improvement in artificial intelligence systems?

- What role might individual differences (e.g., age, education, culture) play in the design and interpretation of cross-modal cognitive tests?

- How might the cross-modal validation approach be extended to assess the reliability of collective or distributed cognitive systems, such as teams or organizations?

- Are there any potential risks or unintended consequences of relying too heavily on cross-modal testing as a method for self-assessment or self-validation?

- How could cross-modal validation be integrated with other assessment methods or diagnostic tools in clinical or research settings?

- In what ways might the cross-modal validation concept be applicable to fields outside of cognitive science or computer systems, such as psychology, education, or even the arts?

- Could the principles of cross-modal validation be used to develop more effective training or learning programs that target multiple cognitive domains simultaneously?

- How might the cross-modal validation approach need to be adapted or modified for testing systems that incorporate emerging technologies like quantum computing or neuromorphic hardware?

- What are some potential future research directions or open questions related to the theory and application of cross-modal validation across different domains?
