- “The use of a p-value threshold of 0.05 as the standard for statistical significance is a convention that originated from the work of Ronald Fisher in the early 20th century. This threshold is not inherently optimal but has become widely adopted in many fields for determining the statistical significance of results.”

- “Alternatives and Supplements to the P-value: Adjusting the P-value Threshold, Confidence Intervals, Bayesian Statistics, Effect Size and Power Analysis, Pre-registration of Studies.”

- “Some scientific journals and associations have encouraged moving beyond the rigid adherence to the 0.05 threshold. For example, the American Statistical Association (ASA) published a statement in 2016 outlining the limitations of p-values and cautioning against their misuse.”

- “Shifting the focus from binary p-value significance to confidence intervals (CIs) can mitigate several distortive effects associated with the pressure to achieve statistically significant results, commonly referred to as “p-hacking” or the “file drawer problem”.”

Table of Contents: (Click any link below to navigate to that section.)

- Is the arbitrary threshold for statistical significance usually set at a 0.05 p-value the optimal way to indicate a significant relationship between two variables? Have other metrics for significance been proposed?

- Explain how “confidence intervals” work.

- Comment on how reliance on a confidence interval rather than a binary p-value would reduce the distortive effects of the pressure to find a p-value significance.

- Why is the replacement of p-values with confidence intervals so difficult?

- Create a 5-item quiz on our discussion above with answers at the end.

- Provide 15 discussion questions related to the exchange above.

Is the arbitrary threshold for statistical significance usually set at a 0.05 p-value the optimal way to indicate a significant relationship between two variables? Have other metrics for significance been proposed?

The use of a p-value threshold of 0.05 as the standard for statistical significance is a convention that originated from the work of Ronald Fisher in the early 20th century. This threshold is not inherently optimal but has become widely adopted in many fields for determining the statistical significance of results. The choice of 0.05 as a cutoff is somewhat arbitrary and has been a subject of ongoing debate among statisticians and researchers.

- Critiques of the 0.05 Threshold:

- The main critique is that the 0.05 threshold can be arbitrary and may not suit all research contexts. It can lead to overemphasizing the importance of a specific p-value (e.g., 0.049 is deemed significant, while 0.051 is not) without considering the effect size, research design, data quality, or the broader context of the study.

- Additionally, the reliance on the 0.05 threshold may contribute to issues like p-hacking (manipulating data to achieve a p-value below the significance threshold) and publication bias (the tendency to only publish studies with significant results).

- Alternatives and Supplements to the P-value:

- Adjusting the P-value Threshold: Some fields and studies may use more stringent thresholds (e.g., 0.01 or 0.001) to claim statistical significance, especially in contexts where the cost of a false positive is high.

- Confidence Intervals: Presenting confidence intervals alongside p-values provides a range of plausible values for the effect size, offering more information about the precision of the estimate.

- Bayesian Statistics: Bayesian methods incorporate prior knowledge along with the current data to compute the probability of a hypothesis. This approach can provide a more nuanced understanding of the evidence in favor or against a hypothesis.

- Effect Size and Power Analysis: Emphasizing effect size and conducting power analysis can help researchers understand the practical significance of their findings, not just whether they are statistically significant.

- Pre-registration of Studies: Pre-registering hypotheses, methods, and analyses before data collection can help mitigate selective reporting and p-hacking, emphasizing the robustness of findings over the attainment of a specific p-value.

- Recent Discussions and Recommendations:

- Some scientific journals and associations have encouraged moving beyond the rigid adherence to the 0.05 threshold. For example, the American Statistical Association (ASA) published a statement in 2016 outlining the limitations of p-values and cautioning against their misuse. In 2019, a special issue of “The American Statistician” discussed alternatives to the null hypothesis significance testing (NHST) framework and the 0.05 cutoff, advocating for a more holistic approach to evaluating evidence.

In conclusion, while the 0.05 p-value threshold is widely used, it is not universally optimal and should not be the sole criterion for determining statistical significance. A combination of statistical measures and a more nuanced approach to data analysis, considering the context and implications of the research, is advisable for a more accurate interpretation of results.

The traditional use of a 0.05 p-value threshold for statistical significance as the sole indicator of a significant relationship between two variables has indeed been subject to ongoing debate and scrutiny. While it remains a widely used practice, several points highlight its limitations and alternative approaches:

Criticisms of the 0.05 threshold:

- Arbitrary nature: The choice of 0.05 has historical background rather than statistical justification. Other values like 0.01 or 0.10 could be equally valid depending on the research context and potential consequences of errors.

- Focus on binary outcome: It dichotomizes results into “significant” or “not significant,” neglecting the gradient of evidence strength within each category. A p-value of 0.051 is practically indistinguishable from 0.049, yet classified differently.

- Misinterpretations: Misconceptions abound, like equating p-value with the probability of the null hypothesis being true or the effect size of the relationship.

Alternative metrics and approaches:

- Confidence intervals: Provide a range of plausible values for the effect size, offering nuanced information beyond a binary “significant” label.

- Effect size measures: Directly quantify the magnitude of the observed relationship, aiding in interpreting its practical significance. Examples include Cohen’s d, correlation coefficients, or risk ratios.

- Bayesian analysis: Shifts the focus from null hypothesis testing to estimating the probability of the alternative hypothesis being true given the data, incorporating prior knowledge and avoiding strict p-value thresholds.

Choosing the right approach:

The optimal approach depends on various factors, including:

- Research question and objectives: Are you primarily interested in rejecting the null hypothesis or understanding the nature and strength of the relationship?

- Sample size and power: Smaller samples are more prone to misleading p-values. Considering power helps ensure your study has a reasonable chance of detecting a real effect.

- Field-specific conventions and considerations: Some disciplines may have established norms for significance levels or prefer specific effect size measures.

Conclusion:

While the 0.05 p-value threshold has served as a historical benchmark, it’s vital to recognize its limitations and explore alternative metrics like confidence intervals, effect sizes, and Bayesian analysis. The most appropriate approach depends on the specific research context and objectives, aiming for a comprehensive understanding of the relationship between variables rather than relying solely on dichotomous significance labels.

Explain how “confidence intervals” work.

Confidence intervals (CIs) provide a range of values within which the true value of a parameter (e.g., the mean, difference between means, proportion, effect size) is expected to fall with a certain level of confidence. Unlike a p-value, which offers a test for hypothesis about whether an effect exists, confidence intervals provide information about the size and direction of an effect, as well as the precision of the statistical estimate.

1. Definition and Interpretation:

- A confidence interval is constructed around a sample statistic (e.g., sample mean) to estimate the range of values that is likely to include the population parameter it estimates.

- The most common confidence level used is 95%, though other levels (e.g., 90%, 99%) can also be used depending on the research context. A 95% CI means that if the same population were sampled many times and intervals calculated, approximately 95% of those intervals would contain the true population parameter.

2. Calculating Confidence Intervals:

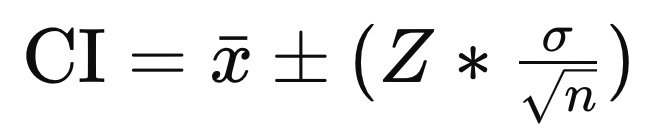

The method for calculating a CI depends on the parameter being estimated and the distribution of the data, but the general formula for a confidence interval for a mean, when the population standard deviation is known and the sample size is large enough for the central limit theorem to apply, is:

- ( x̄ ) is the sample mean.

- ( Z ) is the Z-score associated with the desired confidence level (e.g., 1.96 for 95% confidence).

- ( σ ) is the population standard deviation.

- ( n ) is the sample size.

For small samples or when the population standard deviation is unknown, the t-distribution is used instead of the normal distribution, and the sample standard deviation replaces the population standard deviation in the formula.

3. Interpreting Confidence Intervals:

- If a 95% CI for a mean difference between two groups is [1.2, 3.5], this suggests that we are 95% confident the true mean difference lies between 1.2 and 3.5. If the interval does not contain 0, it suggests a statistically significant difference at the 5% level.

- A narrower interval indicates a more precise estimate of the parameter, while a wider interval suggests less precision, often due to a small sample size or high variability in the data.

4. Applications and Limitations:

- Applications: CIs are widely used in research to assess the reliability of an estimate. They are particularly useful in health sciences for estimating effect sizes, differences between groups, and association measures.

- Limitations: The interpretation of confidence intervals is sometimes misunderstood. A 95% CI does not mean that there is a 95% probability that the interval contains the true parameter value in a frequentist sense. Instead, it reflects the proportion of such intervals that would contain the parameter if the experiment were repeated under the same conditions.

In summary, confidence intervals offer a useful tool for understanding the precision and reliability of an estimate, providing more information than a simple point estimate or a p-value. They help researchers communicate the uncertainty in their findings and make more informed decisions based on statistical analysis.

Confidence intervals are a powerful tool in statistics to express the uncertainty around an estimate. Imagine you want to estimate the average height of all students in your school. You can’t measure everyone, so you take a random sample of students and calculate their average height. This is your point estimate, but wouldn’t it be more realistic to acknowledge that the true average height for the entire school population might be slightly different? This is where confidence intervals come in.

Here’s how they work:

- Calculate the point estimate: This could be a mean, median, proportion, or any other relevant statistic based on your sample.

- Choose a confidence level: This is the percentage of times you want your interval to capture the true population parameter. Common choices are 90%, 95%, and 99%.

- Calculate the margin of error: This value represents the amount of uncertainty around your point estimate. It depends on the sample size, variability within the sample, and the chosen confidence level. Higher confidence levels lead to wider margins of error and vice versa.

- Construct the interval: Add and subtract the margin of error from your point estimate. This gives you the lower and upper bounds of your confidence interval.

Interpretation:

- If you were to repeat the sampling process many times and calculate a confidence interval for each sample, about (confidence level)% of those intervals would capture the true population parameter.

- The wider the interval, the greater the uncertainty in your estimate. Smaller samples usually lead to wider intervals as they provide less information about the population.

Example:

Suppose you collect a random sample of 100 students and find their average height to be 170 cm. With a 95% confidence level, you calculate a margin of error of 2 cm. Your confidence interval would be 168 cm – 172 cm. This means you are 95% confident that the true average height of all students in your school lies somewhere between 168 cm and 172 cm.

Remember:

- Confidence intervals only tell you about the plausible range for the population parameter, not the exact value.

- They represent uncertainty due to random sampling, not other sources of error in your study design or measurement.

- Using confidence intervals alongside other statistics like effect sizes helps paint a more complete picture of your results.

I hope this explanation clarifies how confidence intervals work! Feel free to ask if you have any further questions.

Comment on how reliance on a confidence interval rather than a binary p-value would reduce the distortive effects of the pressure to find a p-value significance.

Shifting the focus from binary p-value significance to confidence intervals (CIs) can mitigate several distortive effects associated with the pressure to achieve statistically significant results, commonly referred to as “p-hacking” or the “file drawer problem”. This pressure often incentivizes practices that compromise the integrity of scientific research. Here’s how reliance on CIs can address these issues:

- Enhanced Interpretation of Results:

- CIs provide a range of plausible values for the parameter being estimated, offering a richer context for interpretation. Unlike a p-value, which merely tells you whether an effect is statistically significant without indicating the size or importance of the effect, CIs inform about the effect size and the precision of the estimate. This encourages a more nuanced interpretation of results, focusing on the practical significance of the findings rather than just statistical significance.

- Reduction in Binary Thinking:

- P-values encourage a binary view of research findings: significant or not significant. This dichotomy can be misleading, as it ignores the continuous nature of evidence. CIs, by contrast, emphasize the uncertainty and variability in the data, shifting the narrative from a simplistic binary outcome to a more complex, but accurate, representation of reality.

- Discouragement of P-hacking:

- P-hacking involves selectively reporting results or manipulating data analysis until statistically significant results are found. Since CIs focus on estimating the range within which the true effect lies, there’s less incentive to manipulate data to cross the arbitrary threshold of p < 0.05. Researchers might feel less compelled to engage in questionable research practices when the emphasis is on estimation and uncertainty rather than significance.

- Mitigation of Publication Bias:

- The obsession with p-values can lead to publication bias, where studies with significant results are more likely to be published than those without. Shifting focus to CIs can help alleviate this bias. Studies with wider CIs, indicating more uncertainty, can still contribute valuable information about the variability and reliability of the effect sizes, making the findings worthy of publication and discussion, regardless of p-value significance.

- Encouragement of Robust Research Practices:

- Emphasizing CIs can promote more robust research practices, such as pre-registration of studies and specifying confidence levels a priori. These practices can help in clarifying the expected precision of the estimates before data collection, reducing the flexibility in data analysis that often leads to p-hacking.

- Improvement in Replicability:

- By providing a clearer picture of the uncertainty and variability associated with estimates, CIs can help in assessing the replicability of findings. If subsequent studies’ CIs overlap with those of the original study, it suggests consistency in the findings, thereby supporting the reliability of the results.

In summary, prioritizing confidence intervals over binary p-value significance can enhance the quality and integrity of scientific research. It encourages a more accurate interpretation of data, reduces incentives for questionable research practices, and promotes a culture of transparency and robustness in scientific communication.

Confidence intervals are a powerful tool in statistics to express the uncertainty around an estimate. Imagine you want to estimate the average height of all students in your school. You can’t measure everyone, so you take a random sample of students and calculate their average height. This is your point estimate, but wouldn’t it be more realistic to acknowledge that the true average height for the entire school population might be slightly different? This is where confidence intervals come in.

Here’s how they work:

- Calculate the point estimate: This could be a mean, median, proportion, or any other relevant statistic based on your sample.

- Choose a confidence level: This is the percentage of times you want your interval to capture the true population parameter. Common choices are 90%, 95%, and 99%.

- Calculate the margin of error: This value represents the amount of uncertainty around your point estimate. It depends on the sample size, variability within the sample, and the chosen confidence level. Higher confidence levels lead to wider margins of error and vice versa.

- Construct the interval: Add and subtract the margin of error from your point estimate. This gives you the lower and upper bounds of your confidence interval.

Interpretation:

- If you were to repeat the sampling process many times and calculate a confidence interval for each sample, about (confidence level)% of those intervals would capture the true population parameter.

- The wider the interval, the greater the uncertainty in your estimate. Smaller samples usually lead to wider intervals as they provide less information about the population.

Example:

Suppose you collect a random sample of 100 students and find their average height to be 170 cm. With a 95% confidence level, you calculate a margin of error of 2 cm. Your confidence interval would be 168 cm – 172 cm. This means you are 95% confident that the true average height of all students in your school lies somewhere between 168 cm and 172 cm.

Remember:

- Confidence intervals only tell you about the plausible range for the population parameter, not the exact value.

- They represent uncertainty due to random sampling, not other sources of error in your study design or measurement.

- Using confidence intervals alongside other statistics like effect sizes helps paint a more complete picture of your results.

I hope this explanation clarifies how confidence intervals work! Feel free to ask if you have any further questions.

Why is the replacement of p-values with confidence intervals so difficult?

The replacement of p-values with confidence intervals (CIs) as the primary metric for statistical significance in research findings faces several challenges, despite the recognized benefits of CIs for providing richer information about the data. The difficulty in making this transition can be attributed to a combination of factors related to tradition, education, and the structural aspects of scientific research and publication. Here are some key reasons why this shift is challenging:

- Tradition and Inertia:

- P-values have been deeply ingrained in the statistical methodology of many fields for decades. This long-standing tradition has created a sense of inertia, making it difficult to shift towards alternative methods. Researchers, reviewers, and editors are accustomed to the conventional p-value framework for hypothesis testing, making it challenging to adopt new standards.

- Misunderstanding and Education:

- There is a widespread misunderstanding of both p-values and CIs among researchers. Many do not fully grasp the nuances of statistical significance, effect size, and confidence levels. The educational system may not sufficiently emphasize the interpretation and importance of CIs, leading to a lack of familiarity and comfort among researchers in applying these concepts effectively.

- Journal and Peer Review Expectations:

- The scientific publishing industry and peer review processes have historically emphasized p-values as the criterion for statistical significance and publication worthiness. Journals often require significant p-values for acceptance, which pressures researchers to focus on p-values over other statistical measures. Changing these entrenched expectations requires a systemic shift in the academic culture and publication standards.

- Simplicity and Convenience of P-values:

- P-values provide a simple, if not simplistic, binary outcome that can be easily interpreted as “significant” or “not significant.” This simplicity is appealing for making quick decisions about research findings, even if it reduces the complexity of the data to a misleading dichotomy. CIs, while more informative, require a more nuanced interpretation that may be viewed as less convenient.

- Lack of Consensus on Alternatives:

- While many statisticians and researchers advocate for the use of CIs over p-values, there is no universal agreement on the best alternative approach. Some suggest enhancing p-value reporting with CIs, while others propose more radical shifts towards Bayesian statistics or other methodologies. The lack of a clear, consensus-driven alternative complicates efforts to move away from p-values.

- Resistance to Change:

- Any significant change in scientific practice faces resistance due to the human tendency to stick with known and trusted methods. Researchers who have built their careers using p-values may be particularly reluctant to adopt new statistical approaches, fearing that it may invalidate previous work or require learning new statistical methods.

- Statistical Software and Tools:

- Many statistical software packages and tools are designed with p-value calculations as a central feature, making it easy for researchers to default to using p-values. While these tools also calculate CIs, the prominence and familiarity of p-value functions may perpetuate their use.

Overcoming these challenges requires concerted efforts across the scientific community, including changes in statistical education, modifications to journal submission guidelines, and a cultural shift towards valuing a more nuanced interpretation of statistical results. Such a transition not only involves adopting CIs but also embracing a broader perspective on statistical evidence that accommodates the complexity and uncertainty inherent in scientific research.

Replacing p-values with confidence intervals isn’t just a simple swap, and there are several reasons why it’s a complex transition:

Cultural and historical inertia:

- P-values have been deeply ingrained in scientific culture for decades, forming the basis of grant funding, publication decisions, and career advancement. Changing this ingrained practice requires a significant shift in mindsets and established workflows.

- Statistical education often emphasizes p-values, leading researchers to feel less comfortable and familiar with using confidence intervals effectively.

Interpretive challenges:

- Confidence intervals require a different interpretative lens than p-values. Moving beyond a binary “significant/not significant” framework demands deeper understanding of uncertainty and its implications.

- Communicating confidence intervals to non-statistician audiences can be challenging. Simplifying the explanation without losing nuance is crucial for broader understanding of research findings.

Technical limitations:

- Some complex research designs or rare events might not offer readily interpretable confidence intervals. In such cases, p-values can still be useful as supplementary information.

- Software tools and reporting protocols often cater to p-values as the primary output. Adapting existing software and data visualization techniques to effectively represent confidence intervals takes time and effort.

Resistance to change:

- Researchers who have built their careers and reputations on p-values might be hesitant to adopt a new paradigm. Overcoming established practices requires addressing potential anxieties and demonstrating the added value of confidence intervals.

- Funding agencies and journals might require adjustments to their review processes if they shift focus from p-values to confidence intervals. This necessitates open discussions and collaborative efforts to implement effective changes.

Ultimately, replacing p-values with confidence intervals isn’t a binary choice but rather a multi-faceted process. It requires ongoing education, cultural shifts, technical advancements, and collaborative efforts across various stakeholders in the scientific community. However, the potential benefits of a more nuanced and informative approach to statistical analysis make it a worthwhile pursuit.

Create a 5-item quiz on our discussion above with answers at the end.

Quiz: Understanding Statistical Significance, P-values, and Confidence Intervals

Question 1: What is the primary critique of using a p-value threshold of 0.05 for determining statistical significance?

A) It is too difficult to achieve in most studies.

B) It overemphasizes the importance of achieving a specific cutoff, leading to potential misinterpretation of data.

C) It underestimates the effect size in large samples.

D) It is too lenient, allowing too many false positives.

Question 2: Which of the following is NOT a benefit of using confidence intervals over p-values?

A) They provide a range of plausible values for the estimated parameter.

B) They completely eliminate the need for hypothesis testing.

C) They offer information about the precision of the estimate.

D) They can indicate the practical significance of findings.

Question 3: Why is it difficult to replace p-values with confidence intervals in research practice?

A) Researchers fully understand confidence intervals but prefer p-values.

B) Tradition and inertia within the scientific community.

C) Confidence intervals are more difficult to calculate.

D) P-values offer less information and therefore are less useful.

Question 4: How do confidence intervals help in understanding the replicability of findings?

A) They provide a binary outcome of replicability.

B) If CIs from subsequent studies overlap with those of the original study, it suggests consistency in the findings.

C) They ensure that findings will be replicated in all future studies.

D) They show the exact p-value needed for replication.

Question 5: What is a significant challenge in shifting from p-values to a more nuanced approach like confidence intervals according to the discussion?

A) The universal agreement on the best alternative to p-values.

B) The lack of software tools for calculating confidence intervals.

C) The simplicity and convenience of p-values for making quick decisions.

D) The overemphasis on Bayesian statistics as the only alternative.

Answers:

Question 1: B) It overemphasizes the importance of achieving a specific cutoff, leading to potential misinterpretation of data.

Question 2: B) They completely eliminate the need for hypothesis testing.

Question 3: B) Tradition and inertia within the scientific community.

Question 4: B) If CIs from subsequent studies overlap with those of the original study, it suggests consistency in the findings.

Question 5: C) The simplicity and convenience of p-values for making quick decisions.

Quiz: Beyond P-Values: Understanding Confidence Intervals

Instructions: Choose the best answer for each question.

- What is the main limitation of relying solely on a p-value of 0.05 for statistical significance?

- (a) It requires complex calculations and advanced statistical knowledge.

- (b) It focuses on a binary outcome, neglecting the strength of the relationship.

- (c) It is only applicable to specific research fields and not generalizable.

- (d) It prioritizes finding “significant” results over unbiased analysis.

- What information does a confidence interval provide that a p-value doesn’t?

- (a) The specific probability of the null hypothesis being true.

- (b) A range of plausible values for the effect size.

- (c) Whether the research findings are publishable or not.

- (d) The exact sample size required for the study.

- Why might researchers be hesitant to switch from p-values to confidence intervals?

- (a) Confidence intervals are more difficult to calculate and interpret.

- (b) They are not compatible with existing statistical software tools.

- (c) Shifting away from established practices can be uncomfortable.

- (d) They offer no additional benefits beyond p-values.

- What is one potential benefit of using confidence intervals in scientific communication?

- (a) They simplify complex statistical concepts for a wider audience.

- (b) They eliminate the pressure to publish only “positive” results.

- (c) They allow for easier comparison of results across different studies.

- (d) They guarantee the accuracy and generalizability of research findings.

- What is the key takeaway from our discussion about p-values and confidence intervals?

- (a) Confidence intervals are always superior to p-values in all research scenarios.

- (b) P-values should be completely abandoned in favor of confidence intervals.

- (c) Understanding both p-values and confidence intervals is crucial for informed interpretation of research findings.

- (d) Statistical significance is the most important factor in evaluating research quality.

Answers:

- (b)

- (b)

- (c)

- (c)

- (c)

Provide 15 discussion questions related to the exchange above.

- What are the limitations of using a 0.05 p-value threshold for determining statistical significance, and how might these limitations impact scientific research?

- How do confidence intervals provide more information than p-values, and why is this important for interpreting research findings?

- Discuss the concept of p-hacking and its implications for the credibility of scientific research. How does an overreliance on p-values contribute to this issue?

- Explain the role of effect size in the interpretation of statistical results. Why should researchers consider effect size in addition to statistical significance?

- How can the use of confidence intervals mitigate publication bias in scientific research?

- Consider the challenges associated with shifting from p-values to confidence intervals in academic publishing. What steps can journals and reviewers take to facilitate this transition?

- What educational changes are needed to improve researchers’ understanding and use of confidence intervals?

- Discuss how the misuse of p-values can lead to misleading conclusions in research studies. Provide examples where appropriate.

- How does the reliance on binary outcomes of statistical tests (e.g., significant/not significant) oversimplify the complexity of research findings?

- Examine the implications of adjusting the p-value threshold for significance (e.g., to 0.01 or 0.001) in high-stakes research fields. What are the potential benefits and drawbacks?

- Discuss the potential of Bayesian statistics as an alternative to traditional p-value-based hypothesis testing. What are the advantages and challenges of adopting Bayesian methods?

- How does pre-registration of studies contribute to the integrity of research findings, and how can it complement the use of confidence intervals?

- Reflect on the cultural and systemic barriers within the scientific community that resist the move away from p-values. How can these barriers be overcome?

- Evaluate the statement: “A confidence interval that does not overlap with zero indicates a statistically significant effect.” Discuss the accuracy and potential misconceptions associated with this interpretation.

- How can statistical software and tools be improved to encourage the use of confidence intervals and other measures beyond p-values in research analysis?

Discussion Questions: Beyond P-Values and Confidence Intervals

- Do you think the pressure to publish “significant” results is a major problem in scientific research? Why or why not?

- What are some potential consequences of relying solely on p-values to assess research findings?

- In your opinion, what are the biggest challenges to implementing confidence intervals as the primary metric for statistical significance?

- How can we encourage researchers and reviewers to embrace confidence intervals alongside p-values for a more nuanced understanding of results?

- Do you think using confidence intervals could help improve scientific communication and public understanding of research? Explain your answer.

- Are there specific research fields or methodologies where p-values might still be preferable or informative? Discuss.

- Beyond replacing p-values, what other changes are needed to promote a more ethical and rigorous culture of scientific research?

- How can we address the potential anxieties or resistance researchers might have when transitioning to a focus on confidence intervals?

- What role can statistical software developers and journals play in facilitating the adoption of confidence intervals?

- Do you think open access publishing and data sharing could contribute to overcoming the limitations of p-values? Why or why not?

- In your view, what are the ethical implications of manipulating data or analysis methods to achieve a “significant” p-value?

- How can we promote critical thinking and skepticism towards published research findings, regardless of the chosen statistical metrics?

- What educational initiatives could help improve scientific literacy and equip the public to better understand and interpret research results?

- Beyond research, are there other areas where a shift away from binary outcomes and towards embracing uncertainty could be beneficial? Discuss.

- Do you think the increasing focus on data science and machine learning will further challenge the traditional reliance on p-values? Why or why not?

These are just some prompts to get your discussion started. Feel free to modify them, add your own questions, and explore related topics!

Table of Contents: (Click any link below to navigate to that section.)

- Is the arbitrary threshold for statistical significance usually set at a 0.05 p-value the optimal way to indicate a significant relationship between two variables? Have other metrics for significance been proposed?

- Explain how “confidence intervals” work.

- Comment on how reliance on a confidence interval rather than a binary p-value would reduce the distortive effects of the pressure to find a p-value significance.

- Why is the replacement of p-values with confidence intervals so difficult?

- Create a 5-item quiz on our discussion above with answers at the end.

- Provide 15 discussion questions related to the exchange above.

Leave a comment